Designing IoT solutions to get the most out of your data

Analyzing real-time data and applying automated decision-making to it is widely understood to be a critical enabler of digital transformation. The fresher the data, the more potential it has to create opportunities, increase profits, improve customer satisfaction and avoid problems. From the start, enterprises need to identify their business requirements to help decide how to handle different types of IoT data.

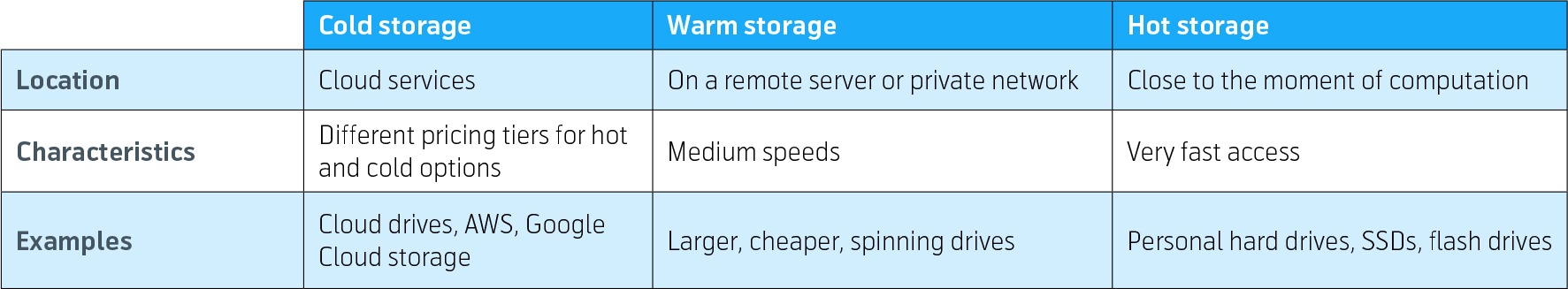

Regardless of which industry you operate in, your data has a temperature. Identifying its temperature will help you know how to manage it. The classifications are described as hot, warm and cold.

Hot data is accessed frequently and kept on faster storage, warm data is accessed occasionally and kept on slower storage, and cold data is rarely accessed and kept on even slower storage.

In general, each type of storage has an associated cost, which is dependent on several factors, such as the type of storage or the size of the storage devices. Costs are also influenced by environmental factors, such as rack space, the amount of power required, and recovery capabilities.

Costs can further be impacted by additional features, such as the amount of memory cache and the use of certain algorithms to assist with performance, error checking or error correction.

Identifying the business requirements is crucial in deciding which type of storage to use for different types of data, and the temperature of the data is an integral part of that decision.

Below, we set out five key considerations organizations need to take into account when assessing which data strategy they will need as well as the resulting requirements each element puts on networks, connectivity, storage, computing resources and hardware.

Topics covered in this guide:

- Five key considerations when designing IoT solutions

- Hardware requirements and costs

- Computing resources and scalability

- Connectivity and latency issues

- Storage and complexity

What is hot and cold data?

Describing data as part of a temperature range may seem out of place but it’s a good means to explain the attributes of different data. There are no formal, global definitions for hot or cold data but hot data is usually referred to as data you have instant access to. It can be real-time data, but it can also be historic data that you have not yet stored away in an archive. Cold data is data that has potential value, but accessing and analyzing it is seldom required. Cold data can therefore be stored or processed in a way that is not time critical and this means you can manage it in a more cost-effective way.

Hot or not?

The usage of the terms hot and cold to distinguish between storage options originates from the physical way data has traditionally been stored. Items located closer to the data center were accessed more regularly, and were located in storage facilities that were hot.

Items located further from the data center had slower loading times, so it became the place to store data that required less frequent access. This type of storage was handled differently from hot storage, typically using old drives that did not generate the kind of heat the other storage facilities created, hence the name.

Many organizations fall somewhere between the extremes of hot and cold, and operate utilizing warm data. There are use cases in which cold data can be warmed up by temporarily moving it from one storage medium to another, with the positive effect of making your analytics faster. Similarly, hot data can be made cooler by storing it on a cheaper medium for later batch processing. For some, hot data is equal to real time data or near-real time data and for others it simply means they can query the data and get results within a few seconds, even though the data itself may be a few days old.

An extreme example of hot data would be taking data from a continuously monitored sensor, analyzing it in as close to real time as possible and then enabling an automated decision to be made. This has huge advantages in product responsiveness and in predictive activities such as purchasing and sales. However, hot data solutions can be much more costly than cold data systems.

For many applications cold data is more than adequate. A water meter, for example, might only need to communicate consumption to enable billing. This can be stored and collated for use at the end of the billing cycle to issue a bill. There’s no need to apply in-stream analytics or time-critical processing. However, if the same meter is being used to detect leaks or anomalies, the IoT data strategy may need to become warmer to allow the information to be acted on more rapidly. Therefore, an enterprise’s IoT analytics strategy must be tailored to support the business case and overall strategy.


Technical implications

Any data that you might need to access immediately must be located in hot storage. This can include data that is, for example:

- Known to change

- Used for query purposes

- Used in any ongoing projects

Cold storage is meant for data that is rarely used but needs to be retained because of, for example, record keeping, compliance or legal reasons.

New technology, such as the Cloud, is transforming how we perceive data computation and data storage. Regardless, the terms hot and cold still describe how accessible storage is: fast and comparatively easy access is hot, while slow and difficult access is cold.

To identify whether your IoT data is hot, warm or cold, you can look at your technical implementation to assess the potential it has to result in actionable insights and within which time frame it can impact the outcome. For example, a security system collecting historical data about break-ins will help inform a system redesign to prevent future attempts, but the data is even more valuable for detecting unauthorized access while it is ongoing, when you still have time to stop it. The technical implementation and the value of the data are interlinked parts of the equation, and in a well-designed system the costs are proportionate to the business benefit.
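As a rough illustration of the time-frame test described above, the following sketch classifies a use case by how quickly its data must be acted on. The function name and both thresholds are assumptions chosen for illustration; as noted earlier, there are no formal definitions of hot, warm or cold data.

```python
from datetime import timedelta

# Illustrative cut-offs only (assumptions, not industry definitions).
HOT_LIMIT = timedelta(seconds=5)
WARM_LIMIT = timedelta(hours=24)

def classify_temperature(time_to_act: timedelta) -> str:
    """Return 'hot', 'warm' or 'cold' for a required reaction time."""
    if time_to_act <= HOT_LIMIT:
        return "hot"
    if time_to_act <= WARM_LIMIT:
        return "warm"
    return "cold"

# Hypothetical use cases taken from the examples in this guide.
print(classify_temperature(timedelta(seconds=1)))  # ongoing break-in detection
print(classify_temperature(timedelta(hours=2)))    # leak detection on a water meter
print(classify_temperature(timedelta(days=30)))    # end-of-cycle billing
```

In practice the thresholds would come from the business case rather than from fixed constants.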

Implementation

Five key considerations when designing IoT solutions

Every element of an IoT implementation needs to be fine-tuned to support the overall requirements of the business case. Here we examine some of the most significant factors to be considered when designing connected products.

1) Hardware requirements and costs

In every business, the IoT devices used, including technology such as meters, trackers and sensors, all need onboard hardware that supports whichever data approach has been selected. This ranges from onboard computing power to perform in-device data processing, to communications modules to enable data to be transmitted. The more complex the requirement, the higher the cost of the onboard hardware and the greater the expense for the purchaser or operator of the device. The cost of a 5G module, for example, is far greater than the cost of a 2G or narrowband-IoT (NB-IoT) module, and there will be complexities associated with antenna mounting and accessibility to consider at the device design stage. Where to locate the computing power, along with cooling and energy supply requirements, also influences the design and overall costs. These hardware decisions should be considered from the beginning because retrofitting and upgrades may prove expensive. From the data-as-an-asset perspective, choosing an IoT solution based on constrained hardware will limit the business potential of your solution.

2) Computing resources and scalability

The hot or cold data decision also has a substantial impact on computing costs. Computing is relatively low cost when performed by centralized servers in a traditional data center or the Cloud, although this requires more network traffic to centralize all the data and therefore has higher traffic costs. Computing resources at the edge, on the other hand, are expensive because they require costly device hardware. A further downside of edge computing is that it normally does not have access to data from other devices, which you do have in the centralized data scenario.

As an example, a heavy machine that predicts upcoming failures would be much more accurate if it could learn from data from other machines. However, that would require all machines to share all their data with each other, and hence would require even more network traffic than the centralized data processing case. From an operational perspective, this is unlikely to be viable, as the processing and network capacity on individual devices cannot be scaled efficiently with an ever-growing IoT fleet deployed globally.

An often-adopted best practice for the predictive failure use case is to centralize all data, build and train models in the cloud on all data and then deploy the model back to the device. This approach is a good way to balance computing and network traffic costs.
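The pattern above can be sketched in a few lines. This is a minimal illustration, not a real predictive-maintenance model: the "model" is just a statistical threshold, and the names `train_fleet_model` and `EdgeDevice`, the vibration readings and the factor `k` are all hypothetical.

```python
from statistics import mean, stdev

def train_fleet_model(fleet_readings: dict, k: float = 3.0) -> dict:
    """Central step: pool readings from all machines and fit a threshold."""
    pooled = [v for readings in fleet_readings.values() for v in readings]
    return {"threshold": mean(pooled) + k * stdev(pooled)}

class EdgeDevice:
    """Device step: holds only the deployed parameters, not the fleet data."""

    def __init__(self, model: dict):
        self.threshold = model["threshold"]

    def is_failure_risk(self, vibration: float) -> bool:
        # Runs locally, so no network round trip is needed per reading.
        return vibration > self.threshold

# Hypothetical vibration readings collected centrally from two machines.
fleet = {
    "machine-a": [1.0, 1.1, 0.9, 1.2],
    "machine-b": [1.0, 0.8, 1.1, 0.9],
}
model = train_fleet_model(fleet)   # trained in the cloud on all data
device = EdgeDevice(model)         # small parameter set deployed to the device
print(device.is_failure_risk(1.05), device.is_failure_risk(5.0))
```

Only the small parameter set travels back to the device, which is what keeps the network cost of this approach low compared with sharing raw data between machines.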

However, a lot of this depends on the nature of the use case. A recommendation is to at least consider hot data or real-time actions, as their business value has significant potential to cover the cost of computing resources, regardless of whether the critical computing capacity is in the Cloud or at the edge.


3) Connectivity and latency issues

Connectivity and the choice of radio technology are very important aspects of your IoT solution. There is an ongoing technology shift from 2G/3G/4G to LTE-M, NB-IoT and 5G that requires careful decisions to ensure future compatibility. Selecting the most suitable connectivity technology is one of the critical decisions that enterprises need to make in their IoT launch strategy, as reliable connectivity is a key component in an IoT solution. Explore this topic further in our Connectivity Technologies for IoT report.

It is impossible to violate the laws of physics. Intense computing will drain the battery, and the low-power wide-area (LPWA) communication standards have bandwidth and latency limitations. Such low-power devices are mainly suited to observing and reporting use cases, where communication only happens occasionally.

This implies that hot data scenarios on such devices cannot depend on the cloud or a data center, as the IoT data will no longer be hot when it arrives at the computing resources.

Furthermore, the battery constraints that are normally associated with LPWA deployments also limit the amount of intelligence and computing power at the edge. A typical LPWA use case is the automated meter that just reports consumption.

A special case is the electricity meter, which has access to a power supply and hence can be equipped with more computing capacity. However, LPWA radio technology will still have limitations on network latency and bandwidth, so it cannot be used for ultra-hot use cases such as load balancing the power supply distribution.

At the other end of the spectrum we have the promise of ultra-low latency and high bandwidth 5G networks. These are a better fit for response-critical IoT solutions like self-driving cars or drones. Nevertheless, network coverage cannot be guaranteed, which means that logic and processing of data must still be managed at the edge in the event of poor network coverage.
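One way to picture the edge fallback described above is a decision function that prefers the network but always has a local rule available. This is a simplified sketch: `decide`, `offline_cloud`, the obstacle-distance input and the action names are hypothetical, and a real vehicle or drone controller would be far more involved.

```python
from typing import Callable, Optional

def decide(obstacle_distance_m: float,
           cloud_decision: Callable[[float], Optional[str]],
           local_limit_m: float) -> str:
    """Prefer the cloud decision; fall back to a local rule if unreachable."""
    try:
        result = cloud_decision(obstacle_distance_m)
    except (TimeoutError, ConnectionError):
        result = None  # treat a failed call as "no answer"
    if result is not None:
        return result
    # Conservative on-device rule used when there is no coverage.
    return "brake" if obstacle_distance_m < local_limit_m else "continue"

def offline_cloud(_distance: float) -> Optional[str]:
    """Stand-in for a remote service that cannot be reached."""
    raise ConnectionError("no coverage")

print(decide(2.0, offline_cloud, local_limit_m=5.0))
```

The point of the design is that the device never blocks on the network for a response-critical action; the remote answer is an improvement when available, not a dependency.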

Connectivity and latency should obviously be well aligned with your use case, but it is important to acknowledge that they also have implications for the data-as-an-asset perspective and for whether your IoT data will be hot or cold.

4) Storage and complexity

The cloud revolution has led to the perception that storage is almost free, but this is heavily dependent on the storage medium or power of your data warehouse.

Cloud providers offer cheap storage on magnetic discs that can be scaled almost infinitely. This principle is used in the Data Lake concept, where you collect data from many sources and store it for later use.

The IoT Data Lake is a cold data solution because the data is normally old by the time you consume or process it and, moreover, query and search response times are quite long.

Cold data with relatively long retrieval times is therefore not useful for automation. The most common use cases for Data Lakes are training AI and ML models on historical data, batch reporting on what happened during a past period, and retrieving historical records when it is not time critical (e.g. finding forensic evidence to prepare a legal case against a hacker who penetrated a system some time ago).

A completely different use case is when you always need to know the current state of the devices in your IoT fleet. A simple case would be connected door solutions that continuously report whether they are open or closed and the number of people that pass through the door in either direction. This information would be critical to control airflow, temperature or the number of people in different areas of a building. If this information is needed in real time (hot data), the data from the doors must be updated centrally in real time. Storing information about events and states in an IoT Data Lake, or even a data warehouse, is usually not optimal because of the transaction volume and the time it takes to update and store each event.

With a non-optimal storage medium, transactions can easily queue up and cause latency in the information flow that is critical for the control of automated processes. The consequence is that the control system acts on wrong and/or outdated information (e.g. pausing the heating when the temperature drops, rather than the opposite). These kinds of solutions usually require an in-memory solution that can store, update and retrieve data in under a millisecond. In-memory storage is, however, orders of magnitude more expensive than magnetic discs.
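A back-of-the-envelope model shows how quickly such a queue makes data stale. The sketch below assumes constant arrival and write rates, which real systems rarely have, and the function name and the figures in the example are hypothetical.

```python
def staleness_seconds(arrival_rate: float, write_rate: float,
                      elapsed: float) -> float:
    """Delay for the newest event after `elapsed` seconds of sustained load.

    Rates are in events per second. If the store keeps up, no backlog builds.
    """
    backlog = max(0.0, (arrival_rate - write_rate) * elapsed)
    return backlog / write_rate

# Hypothetical load: 1,200 door events/s arrive, the store writes 1,000/s.
print(staleness_seconds(1200, 1000, elapsed=60))  # 12.0
```

With these assumed numbers, one minute of sustained load leaves the newest event 12 seconds behind, and the gap keeps growing for as long as arrivals outpace writes.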

The recommendation is usually to find a balance between hot and cold, where current states are stored in memory but the history of state changes is stored in an IoT data lake. You can then train models for automation on vast amounts of historical data from the cold data lake, but deploy them to your hot real-time data flow for optimal control of your overall system.
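The split between hot current state and cold history can be sketched as follows. This is a minimal single-process illustration: a Python dictionary stands in for an in-memory store, a local JSON Lines file stands in for the data lake, and the class and field names are hypothetical.

```python
import json
import os
import tempfile
import time

class DoorStateStore:
    """Keeps the latest state per door in memory and appends history to a log."""

    def __init__(self, history_path: str):
        self.current = {}                  # hot: latest event per door
        self.history_path = history_path   # cold: stand-in for a data lake

    def update(self, door_id: str, is_open: bool) -> None:
        event = {"door": door_id, "open": is_open, "ts": time.time()}
        self.current[door_id] = event      # overwrite, so reads stay fast
        with open(self.history_path, "a", encoding="utf-8") as f:
            f.write(json.dumps(event) + "\n")  # append-only history

    def is_open(self, door_id: str) -> bool:
        return self.current[door_id]["open"]

path = os.path.join(tempfile.mkdtemp(), "door_history.jsonl")
store = DoorStateStore(path)
store.update("door-1", True)
store.update("door-1", False)
print(store.is_open("door-1"))  # answered from memory, not from the history file
```

In a production system the history write would typically be asynchronous or batched, so that slow cold storage never delays the hot path.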

5) Future flexibility

Many use cases simply do not need hot data processing capabilities. An application such as a pet tracker, for example, only needs to be able to perform geolocation that is visualized in a smartphone app. It’s simple, and there’s no automation and no business logic required. This application has a relatively high data transfer cost because, if a pet goes missing, the information needs to be communicated continuously and at high speed to give the owner the information they need.

However, if the solution is to be upgraded with business logic such as geo-fencing or alerts and notifications, this comes with computing needs that must be hosted either in the device, in the cloud/backend or in the smartphone application. Therefore, you need to decide upfront on the level of flexibility you require, because a simple device will likely not be able to support edge capabilities.
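To give a sense of the computing involved, here is a minimal geo-fence check based on the haversine great-circle distance. The function names, coordinates and radius are hypothetical, and the same logic could run on the device, in the backend or in the app.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres

def distance_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points (haversine formula)."""
    p1, p2 = radians(lat1), radians(lat2)
    dphi = p2 - p1
    dlmb = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(p1) * cos(p2) * sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))

def outside_geofence(home: tuple, position: tuple, radius_m: float) -> bool:
    """True if `position` is further than `radius_m` from `home`."""
    return distance_m(*home, *position) > radius_m

home = (59.3293, 18.0686)      # hypothetical home location
position = (59.3303, 18.0686)  # 0.001 degrees of latitude further north
print(outside_geofence(home, position, radius_m=100))
```

The calculation itself is cheap; the design question raised above is where it runs, since evaluating it on the device requires onboard processing, while evaluating it in the backend requires every position to be transmitted.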

Another example is a smart meter. Initially it can be a dumb domestic electricity meter that simply counts consumption and presents that information at preset intervals to enable billing. However, it needs an upgrade path, as electricity providers are deploying smart meters that will have active lives of 20 years or more. During that time, it is expected that homeowners will generate their own renewable energy and sell it back to the grid, while consumption profiles will become more complex with electric vehicle charging and other intensive use cases coming to market.

Intelligent smart meters therefore need a far more real-time capability, and electricity companies need timely information on how much energy a home is generating in order to ensure the grid does not become overloaded or, on the other hand, to make sure there is enough supply to meet user demand in the area. This complex use case involves collecting hot data, potentially processing it within the device, and delivering the information robustly and with low latency so it can be acted upon automatically. Finally, the IoT data could also be stored for future learning and to train automated systems.

In this scenario, a power company that only rolls out an LPWAN-connected meter with limited processing power may not have access to the low-latency connectivity it needs, nor the device intelligence required to make a strong business case out of hot data. In addition, the cost of an upgrade will both delay time-to-market and potentially make the future business case unsustainable when, for a relatively contained additional initial cost, the device could have been shipped in a way that is prepared for the future.

Conclusion

Using the data-as-an-asset perspective will help you design your IoT solution in an optimal way.

There are a number of trade-offs, such as cost, complexity, time-to-market and the life-span of your IoT solution, that you need to balance in the design process. In the end, the ability to act on your data is what will drive value.

A hot data scenario implies you can act fast, e.g. avoid costly failures before they become irreversible, but it normally comes with higher onboard hardware, computing and/or connectivity costs. The cold data scenario is usually considerably cheaper and less complex, and therefore has a faster time to market.

Finally, the life-span of your IoT solution needs to be balanced against the upcoming technology shifts that will determine whether your solution remains relevant to the market. Your ability to support future IoT use cases, where data requirements may become significantly different, is also key. Therefore, your IoT architecture should enable this evolution of new IoT use cases without the need to replace key components.

In summary, data is constantly evolving, and how you analyze and prioritize it plays a significant role in what will be best practice for your particular needs. Therefore, enterprises need to consider what their current and future requirements are likely to be as soon as they start designing IoT solutions and examining the integral role IoT data can play in them.